Neuromorphic computing represents a shift in how we think about processing power, with potential applications across a wide range of industries. The approach models hardware on the human brain’s structure and function, with the aim of large efficiency gains and new capabilities that could change how we interact with technology.

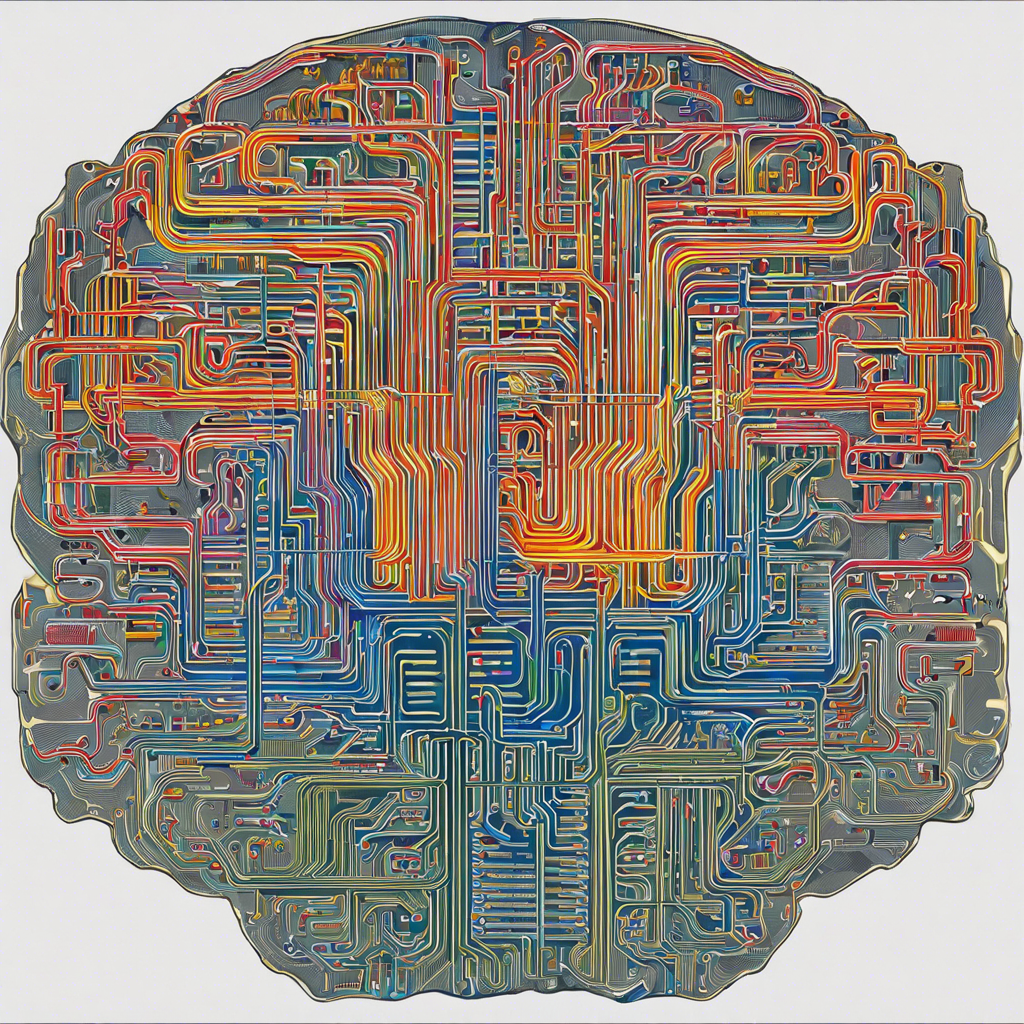

At its core, neuromorphic computing involves building artificial neural networks that emulate the brain’s web of neurons and synapses directly in hardware. These networks are implemented on silicon chips whose circuits are organized more like biological neural tissue than like a conventional processor. One key advantage is energy efficiency. Because many simple units work in parallel, much as neurons do, these chips can perform certain complex tasks while consuming significantly less power than traditional computers. This makes the approach attractive for mobile devices and embedded systems, where power efficiency is crucial.
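To make the idea of emulating a neuron more concrete, here is a minimal sketch of a leaky integrate-and-fire neuron, a simplified model commonly used for spiking neural networks. The function name `simulate_lif` and every parameter value are illustrative assumptions, not taken from any particular chip or library.

```python
def simulate_lif(input_current, dt=1.0, tau=20.0,
                 v_rest=0.0, v_thresh=1.0, v_reset=0.0):
    """Simulate one leaky integrate-and-fire neuron.

    input_current: sequence of input values, one per time step.
    Returns the list of time-step indices at which the neuron spiked.
    All default parameters are hypothetical, chosen only for illustration.
    """
    v = v_rest          # membrane potential starts at rest
    spikes = []
    for step, current in enumerate(input_current):
        # Forward-Euler update: the potential leaks back toward rest
        # while integrating the incoming current.
        v += (dt / tau) * (-(v - v_rest) + current)
        if v >= v_thresh:
            # Threshold crossed: emit a spike and reset the potential.
            spikes.append(step)
            v = v_reset
    return spikes


if __name__ == "__main__":
    weak = simulate_lif([0.5] * 200)    # input too weak to reach threshold
    strong = simulate_lif([2.0] * 200)  # input strong enough to fire repeatedly
    print(len(weak), len(strong))
```

In this sketch a weak input never produces a spike, while a stronger input makes the neuron fire at a regular rate. Output is produced only when a spike occurs, which is one intuition for why event-driven hardware can save power when activity is sparse.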

Moreover, neuromorphic computing has the potential to excel in areas where conventional computers struggle, such as pattern recognition, data classification, and adaptive learning. It can process and interpret sensory data in a more human-like manner, making it particularly useful for applications in image and speech recognition, natural language processing, and even autonomous robotics. Envision a robot that can seamlessly navigate and interpret its surroundings, or a self-driving car that can anticipate and react to potential hazards with almost human-like reflexes.

Another significant benefit of neuromorphic computing is its fault tolerance. Much as our brains can continue functioning even with some damaged neurons, neuromorphic systems can tolerate a certain level of component failure without breaking down completely. This improves the overall reliability and resilience of the technology, making it well suited to critical applications where failures can have significant consequences.
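One simple way to picture this graceful degradation is a redundant population of units whose outputs are averaged. The sketch below is only an illustration of that idea: the function `population_readout` and the numbers in it are made up for the example and do not describe how any specific neuromorphic chip handles faults.

```python
def population_readout(unit_outputs, failed=frozenset()):
    """Average the outputs of every unit that has not failed.

    unit_outputs: list of per-unit estimates of the same signal.
    failed: set of indices of units treated as broken and ignored.
    """
    alive = [out for i, out in enumerate(unit_outputs) if i not in failed]
    if not alive:
        raise ValueError("all units have failed")
    return sum(alive) / len(alive)


# Ten hypothetical units, each giving a slightly different estimate
# of the same underlying signal (illustrative values only).
outputs = [0.98, 1.03, 1.01, 0.97, 1.02, 0.99, 1.00, 1.04, 0.96, 1.00]

healthy = population_readout(outputs)
degraded = population_readout(outputs, failed={1, 4, 7})  # 3 of 10 units lost

if __name__ == "__main__":
    print(healthy, degraded)
```

Because no single unit carries the whole answer, losing three of the ten units shifts the readout only slightly rather than causing a total failure.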

The potential impact of neuromorphic computing extends beyond technological advancement. By building systems that mimic the human brain, researchers can test ideas about how it works, which may in turn inform neuroscience and cognitive science. The technology could also benefit healthcare, with applications in brain-computer interfaces, advanced prosthetics, and improved diagnostics and treatments for neurological disorders.

Despite its promise, neuromorphic computing is still a nascent field with challenges to overcome. One significant hurdle is the complexity of designing and manufacturing these specialized chips, which requires a deep understanding of both neuroscience and semiconductor engineering. Programming and training these neural networks presents its own set of challenges, demanding new approaches and algorithms that can map problems onto the hardware and make effective use of it.

The race is on to commercialize this technology, with major technology companies and startups investing significant resources. IBM, for instance, has developed its TrueNorth neuromorphic chip, while Intel is exploring similar concepts with its Loihi research chips. In academia, the Human Brain Project, a large-scale European research initiative that ran from 2013 to 2023, set out to further our understanding of the brain and to develop neuromorphic technologies.

As we continue to push the boundaries of what is possible with artificial intelligence and computing power, neuromorphic computing offers a glimpse of a future where machines and humans coexist in a more seamless and intuitive way. It may still be years before we fully realize the potential of this technology, but the journey towards mimicking the human brain in silicon is an exciting one that promises to redefine how we interact with the world around us.

Get ready to witness a future where machines and humans converge, thanks to the transformative power of neuromorphic computing.